UK Online Safety Act: What Image Sharers Need to Know

Most people have heard of the Online Safety Act. Fewer know what it actually does. And almost nobody knows how fast it has moved in the last six months.

Here is the short version: the Act became law in October 2023. For most of 2024, it was still being implemented — Ofcom was writing codes of practice, consulting with industry, setting timelines. The law existed, but enforcement was largely theoretical.

That changed in March 2025, when the first duties came into force. It changed again in July 2025, when child safety obligations kicked in. It changed again in January 2026, when cyberflashing became a priority offence. And it changed again in February 2026, when AI-generated intimate images were explicitly criminalised.

By March 2026, Ofcom had opened over 80 investigations, issued fines to real platforms, and opened a formal investigation into the provider of two image board services over suspected failures to meet its obligations under the Act. The regulator fined 4chan £520,000. It fined a pornography company £1 million. It fined Kick £800,000.

This is not theoretical anymore. It is happening right now, to real platforms, for things that matter directly to anyone who shares, uploads, or receives images online in the UK.

This guide explains what has changed, why it matters, and what you can actually do with these new rights.

What the Online Safety Act Actually Is — and Who It Covers

The Online Safety Act 2023 is UK legislation that places a legal duty of care on online platforms. It requires any service where users can share content with each other — social media, image hosts, forums, messaging apps, dating sites, file-sharing services, gaming platforms — to actively protect users from illegal content rather than simply responding to complaints after the fact.

The shift from reactive to proactive is the key change. Under the old framework, platforms were largely protected from liability as long as they took down content when notified. Under the Online Safety Act, platforms must assess the risk of harmful content appearing on their service, implement systems to prevent it, and demonstrate to Ofcom that those systems work.

The Act applies to any user-to-user service where content generated, uploaded or shared by one user may be read, viewed, heard, or otherwise experienced by another user. Content includes written material or messages, oral communications, photographs, videos, visual images, music and data of any description.

That definition covers a lot. If you use any service that lets you share a photo with another person — including messaging apps, image hosts, Discord servers, Reddit, and any image-sharing platform — the Act covers the platform you are using.

The duty of care applies globally to services with a significant number of UK users, or which target UK users, or those which are capable of being used in the UK, where there are reasonable grounds to believe that there is a material risk of significant harm to individuals in the UK.

This matters because it means the Act is not limited to UK-registered companies. A US-based image board with millions of UK users is covered. A Greek image-sharing platform used predominantly by UK audiences is covered. The Act follows the audience, not the company address.
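The scope test described above can be read as three alternative routes, sketched below in Python. This is an illustrative reading of the wording quoted in this article, not legal advice: the `Service` fields and the `has_uk_link` function are hypothetical names, and the sketch assumes that the "material risk of significant harm" condition attaches only to the third route (a service merely capable of being used in the UK).

```python
from dataclasses import dataclass


@dataclass
class Service:
    """Hypothetical summary of a service's links to the UK (illustrative fields only)."""
    significant_uk_users: bool
    targets_uk_users: bool
    usable_in_uk: bool
    material_risk_of_harm_to_uk: bool


def has_uk_link(service: Service) -> bool:
    """Return True if any of the three routes described in the text applies."""
    # Routes 1 and 2: a significant UK user base, or targeting UK users.
    if service.significant_uk_users or service.targets_uk_users:
        return True
    # Route 3 (assumed reading): being usable in the UK is not enough alone;
    # it must come with reasonable grounds to believe there is a material risk.
    return service.usable_in_uk and service.material_risk_of_harm_to_uk


us_image_board = Service(True, False, True, False)
small_overseas_forum = Service(False, False, True, False)

print(has_uk_link(us_image_board))        # True
print(has_uk_link(small_overseas_forum))  # False
```

Nothing in the sketch looks at where the company is registered, which is the point of the paragraph above: the test turns on the audience and the risk, not the address.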

The Timeline: What Has Already Happened

Understanding what rights you have requires understanding when each layer of the Act came into force. The implementation has been phased, and several significant moments have already passed.

- October 2023 — Royal Assent: The Act became law. Nothing changed immediately for users or platforms — Ofcom then began the work of turning the Act into enforceable codes of practice.

- January 2024 — Criminal offences live: The Act’s new criminal offences came into effect. Sharing intimate images without consent — including threatening to share them — became a criminal offence under the Online Safety Act framework, in addition to existing law. Cyberflashing (sending unsolicited sexual images) became a criminal offence in England and Wales.

- March 2025 — Illegal harms duties enforceable: As of 17 March 2025, platforms have a legal duty to protect their users from illegal content online. Ofcom is actively enforcing these duties and has opened several enforcement programmes to monitor compliance. This is the point at which the law went from existing to being enforced.

- July 2025 — Child safety duties: As of 25 July 2025, platforms have a legal duty to protect children online. Platforms are now required to use highly effective age assurance to prevent children from accessing pornography or content that encourages self-harm, suicide or eating disorders.

- January 8, 2026 — Cyberflashing becomes a priority offence: Under new regulations that came into force on this date, cyberflashing (sending unsolicited sexual images) was elevated from a criminal offence to a priority offence under the Act. Making it a priority offence means that regulated online platforms must remove such content when they become aware of it and take steps to prevent it from appearing in the first place. This is the difference between a platform removing content after a report and a platform being legally required to detect and block it proactively.

- February 6, 2026 — Deepfakes criminalised: The intimate image provisions of the Data (Use and Access) Act 2025 came into force, creating criminal offences for both creating and requesting the creation of intimate images of an adult without consent, including AI-generated deepfakes. Creating a deepfake of someone in an intimate context, without their consent, is now explicitly illegal in the UK, regardless of whether you share it.

- February 19, 2026 — 48-hour takedown rule announced: The government announced an amendment to the Crime and Policing Bill that will require regulated service providers to take down non-consensual intimate images within 48 hours of being notified of them. Failure to comply will be subject to the Online Safety Act penalty regime — up to 10% of worldwide turnover and a potential block on the service.

- March 6, 2026 — Image board investigation opened: Ofcom opened an investigation into the provider of two image board services over suspected failures to comply with its duties under the Online Safety Act, including requirements to prevent individuals from encountering non-consensual intimate images and child sexual abuse material (CSAM).

- March 2026 — 4chan fined £520,000: Ofcom issued a total of £520,000 in fines to 4chan for failing to implement age verification, failing to assess the risk of illegal content, and failing to explain in its terms of service how it protects users from criminal content.

The pace of this is worth noting. In the roughly eight months between July 2025 and March 2026, the Act went from largely theoretical to actively enforced, with real fines issued and real investigations opened into image-sharing platforms specifically.

What This Means for the Platforms You Use

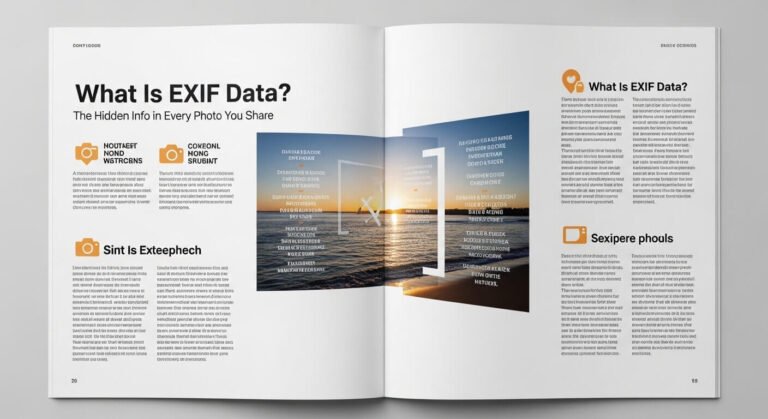

The practical impact of the Online Safety Act on the image-sharing platforms most relevant to former ChatPic users has been significant — and in one notable case, resulted in a major platform choosing to leave the UK market rather than comply.

- Imgur blocked UK access: When Ofcom issued compliance requirements to Imgur, the platform’s response was to block access for UK users rather than implement the required measures. This has left UK users unable to access one of the most widely used anonymous image hosts without a VPN. It is a direct consequence of the Act’s enforcement.

- 4chan fined and in active dispute: Ofcom fined 4chan £450,000 for failing to prevent children from accessing adult content, £50,000 for failing to assess the risk of illegal material, and £20,000 for failing to explain in its terms of service how it protects users from criminal content. 4chan has declined to pay and filed legal action in a US federal court challenging Ofcom’s jurisdiction. The standoff is ongoing.

- File-sharing services under active monitoring: Ofcom is currently monitoring the measures being taken by providers of file-sharing and file-storage services that present risks of harm to UK users from image-based child sexual abuse material as part of its enforcement programme. This monitoring programme specifically covers services used to distribute illegal images at scale, which is precisely the category that ChatPic’s successor sites occupy.

- Anonymous image hosts face new scrutiny: The Act’s requirements apply to any platform accessible to UK users. An image host that allows users to upload and share images without moderation, without age assurance, and without a risk assessment is in potential breach of the Act, regardless of where it is hosted.

The direct implication for users looking for a ChatPic replacement in 2026 is that the legal landscape has shifted significantly. Platforms that operated the way ChatPic did — with no moderation, no compliance infrastructure, and no accountability — now face fines and potential access blocks if they serve UK users. Some have already responded by blocking UK access rather than complying. Others are operating in breach of the Act and may face enforcement action.

What Rights You Now Have as a UK User

This is where the Act becomes directly useful rather than just legally interesting.

The Right to Have Illegal Content Removed Quickly

Under the Act, every regulated platform must remove illegal content once it becomes aware of that content. For image-sharing platforms, this includes non-consensual intimate images, images involving minors, and images used in targeted harassment or threatening communications.

This is meaningfully different from the pre-Act position, where platforms could technically acknowledge a report and take weeks to act. The Act creates a legal duty, and Ofcom can investigate and fine platforms that fail to meet it.

If a platform is taking unreasonable time to remove illegal content involving your images, you can report that to Ofcom directly at ofcom.org.uk. This is a new route that did not exist before the Act.

The Right to Have NCII Removed Within 48 Hours

Once the Crime and Policing Bill amendment passes, which is expected shortly, regulated platforms will face a legal deadline. After being notified of a non-consensual intimate image (NCII), a platform will have 48 hours to remove it or face fines of up to 10% of its worldwide turnover. Until the amendment becomes law, this is a proposed right rather than one you can rely on.

In practice, the Revenge Porn Helpline (0345 6000 459) remains the fastest route to removal because they work directly with platforms’ trust and safety teams. But the 48-hour legal requirement creates a backstop when direct reporting fails.
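If you keep a record of when you notified a platform, the proposed 48-hour window is simple to track. The sketch below is a minimal illustration, assuming the clock starts at the moment of notification and runs for 48 consecutive hours; the announcement described in this article does not spell out how the period will be counted, and the function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Figure taken from the announced amendment; it is not yet law.
TAKEDOWN_WINDOW = timedelta(hours=48)


def takedown_deadline(notified_at: datetime) -> datetime:
    """Assumed deadline: 48 consecutive hours after the notification timestamp."""
    return notified_at + TAKEDOWN_WINDOW


def is_overdue(notified_at: datetime, now: datetime) -> bool:
    """True once the assumed deadline has passed without removal."""
    return now > takedown_deadline(notified_at)


notified = datetime(2026, 3, 2, 9, 30, tzinfo=timezone.utc)
print(takedown_deadline(notified).isoformat())  # 2026-03-04T09:30:00+00:00
print(is_overdue(notified, datetime(2026, 3, 4, 10, 0, tzinfo=timezone.utc)))  # True
```

A timestamped copy of your report (a confirmation email or screenshot) is what makes a calculation like this useful if you later need to escalate.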

The Right to Report Platforms to Ofcom

Users can now escalate non-compliance directly to Ofcom. If a platform you have reported illegal content to has failed to act, you can report that failure at ofcom.org.uk/online-safety. Ofcom’s enforcement track record in 2025 and 2026 shows they are actively following up.

Ofcom has strong enforcement powers, including the ability to investigate non-compliance, impose fines of up to 10% of qualifying worldwide revenue, and, in the most serious cases of non-compliance, apply to the courts to block services from the UK.
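To make the 10% figure concrete, here is a rough sketch of how the maximum penalty scales with revenue. It assumes the cap is the greater of a fixed sum of £18 million and 10% of qualifying worldwide revenue; the £18 million figure comes from the Act's penalty provisions as commonly summarised, not from this article's sources, so treat it as an assumption. The function name is hypothetical and the revenue figures are invented for illustration.

```python
# Assumption: fixed-sum alternative under the Act's penalty provisions.
FIXED_SUM_GBP = 18_000_000
# The 10% share of qualifying worldwide revenue quoted in the text.
REVENUE_SHARE_PERCENT = 10


def max_penalty_gbp(qualifying_worldwide_revenue_gbp: int) -> int:
    """Upper bound on a fine: the greater of the fixed sum and the revenue share."""
    revenue_based = qualifying_worldwide_revenue_gbp * REVENUE_SHARE_PERCENT // 100
    return max(FIXED_SUM_GBP, revenue_based)


print(max_penalty_gbp(50_000_000))     # 18000000
print(max_penalty_gbp(2_000_000_000))  # 200000000
```

This is a ceiling, not a tariff. The fines reported in this article, such as 4chan's £520,000, sit far below it.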

The Right to Clear Reporting Mechanisms

Under the Act, every regulated platform must provide users with clear and accessible ways to report problems. If an image-sharing platform you are using has no visible report mechanism, or has one that is deliberately difficult to find, that is a potential Act violation you can raise with Ofcom.

The Right to Age Assurance on Pornographic Platforms

If you are concerned that a platform is allowing children to access pornographic or severely harmful content, you can report this to Ofcom. Since July 2025, platforms hosting such content are legally required to implement highly effective age assurance. Ofcom has already fined multiple platforms for failing this test.

What the Act Does Not Do — Being Honest About Its Limits

The Act’s limitations are worth understanding clearly, because some of the coverage around it has overpromised.

- It does not make anonymous image sharing illegal: Sharing images anonymously remains entirely legal for users. The Act’s obligations are on platforms, not on the individuals who use them. You are not required to create an account, verify your identity, or attach your name to image uploads by virtue of this legislation.

- It does not give Ofcom direct power to prosecute individuals: The Act’s criminal offences — sharing intimate images without consent, cyberflashing, creating deepfakes — are prosecuted through the standard criminal justice system, not by Ofcom. Ofcom regulates platforms. The police handle individual criminal behaviour.

- It does not guarantee fast action on every report: Despite stronger legal protections, charging rates remain low in cases involving non-consensual intimate images. This is primarily because the offence is relatively new and complex, and police are still not properly investigating it or collecting sufficient evidence. The law has improved significantly, but police enforcement has not yet kept pace with the legislation.

- It does not reach every platform equally: 4chan has refused to pay a penny of its £520,000 in fines and has filed legal action in a US federal court challenging Ofcom’s jurisdiction. Platforms willing to simply ignore UK law and accept they cannot operate openly in the UK continue to exist and remain accessible via VPN. The Act is powerful within its reach — that reach has limits.

- It does not clearly reach small overseas services with few UK users: The Act applies to services with significant UK user numbers or that target the UK market. A small image-sharing forum with no UK commercial presence and low UK traffic occupies a greyer area, though Ofcom has shown willingness to pursue extraterritorial enforcement.

The Connection to ChatPic — Why This Matters for This Site’s Audience

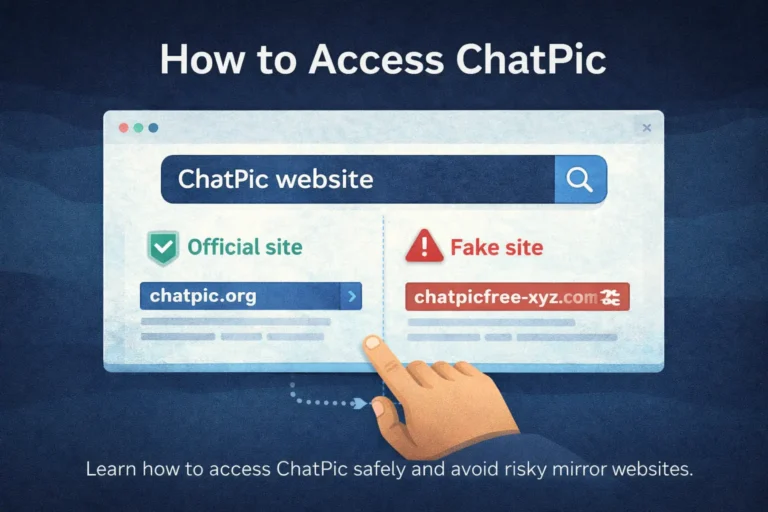

ChatPic closed in November 2023 — just weeks after the Online Safety Act received Royal Assent. The timing is not a coincidence. The platform was already under investigation and under infrastructure pressure from hosting providers and payment processors responding to legal exposure. The Act, had it been fully enforced at the time, would have made ChatPic’s business model illegal within the UK immediately.

The platforms that have since attempted to fill the gap ChatPic left — using its name, its design, its user base — now operate in an environment where the legal framework ChatPic evaded is actively enforced. Mirror sites that allow unmoderated image uploads to UK users without any compliance infrastructure are not simply operating in a grey area. They are operating in breach of UK law.

This matters for users choosing where to share images in 2026. A platform without a visible risk assessment, without an accessible reporting mechanism, and without any stated moderation policy is not just ethically questionable — it is in a structurally weaker legal position than it was two years ago, and platforms in that position are increasingly choosing between compliance and blocking UK access.

What to Do Right Now

If you share images online and you want to understand how the Act affects you practically, the most useful actions are straightforward.

Check that the platforms you use have an accessible reporting mechanism. The Act requires it. If a platform has no clear way to report illegal content, that is a compliance signal worth noting.

If your image has been shared without your consent on any regulated platform, report it directly to the platform first, then to the Revenge Porn Helpline on 0345 6000 459 if it is intimate in nature. If the platform fails to act, escalate to Ofcom at ofcom.org.uk/online-safety.

If you are evaluating a new image-sharing platform and want to assess its compliance posture, look for four things: a published privacy policy, terms of service that address illegal content, a visible content report mechanism, and evidence of active moderation. These are reasonable proxies for the baseline the Act expects of regulated services. A platform missing all four is almost certainly non-compliant.
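The four checks above can be kept as a simple checklist when comparing platforms. The sketch below is only an aid for note-taking, not a compliance test: the signal names mirror the list above, the function names are hypothetical, and deciding whether a signal is present is left to your own judgement.

```python
BASELINE_SIGNALS = (
    "published privacy policy",
    "terms of service addressing illegal content",
    "visible content report mechanism",
    "evidence of active moderation",
)


def missing_signals(observed: set[str]) -> list[str]:
    """List the baseline signals that were not observed, in checklist order."""
    return [signal for signal in BASELINE_SIGNALS if signal not in observed]


def summarise(observed: set[str]) -> str:
    """Turn the observations into a one-line note."""
    missing = missing_signals(observed)
    if not missing:
        return "all four signals present"
    if len(missing) == len(BASELINE_SIGNALS):
        return "all four signals missing: almost certainly non-compliant"
    return "missing: " + ", ".join(missing)


print(summarise({"published privacy policy", "visible content report mechanism"}))
print(summarise(set()))
```

Having all four signals does not prove a platform complies. It only means none of the obvious warning signs are present.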

Conclusion

The Online Safety Act 2023 is the most significant change to UK internet regulation in a generation. For anyone who shares images online, it has created new criminal offences, new platform obligations, new enforcement powers, and new rights that did not exist before January 2024.

Its enforcement is imperfect, and its limits are real. But platforms are being fined, investigations are open, and the regulatory pressure on image-sharing services is measurably higher in March 2026 than it was a year ago.

The platforms that survived ChatPic’s collapse were, by and large, the ones that already had compliance infrastructure in place. The platforms that emerged to replace it without that infrastructure now face an enforcement environment that ChatPic itself never had to navigate. The lesson of ChatPic was that simplicity without accountability does not scale. The Online Safety Act is making that lesson expensive.

Frequently Asked Questions

Does the Online Safety Act affect me personally as a user?

The Act’s direct obligations are on platforms, not individual users. It does not require you to verify your identity or create an account to share images. However, it creates new criminal offences — including sharing intimate images without consent and creating deepfakes — that apply to individuals, prosecuted through the standard criminal justice system rather than by Ofcom.

What can I actually do if a platform refuses to remove my image?

Report to the platform first. If they fail to act, report to the Revenge Porn Helpline (0345 6000 459) for intimate images, or directly to Ofcom at ofcom.org.uk/online-safety for any regulated platform failing its obligations. Ofcom can investigate, fine, and in serious cases seek a court order to block the platform in the UK.

Does the Act apply to platforms based outside the UK?

Yes. The duty of care applies globally to services that have a significant number of UK users, that target UK users, or that are capable of being used in the UK where there are reasonable grounds to believe there is a material risk of significant harm to individuals in the UK. Ofcom has already issued fines to US-based platforms and opened investigations into international services.

What is the difference between a criminal offence and a priority offence under the Act?

A criminal offence means that an individual who commits the act can be prosecuted. A priority offence under the Act means platforms must proactively detect and prevent the content from appearing — not just remove it after a report. Cyberflashing and sharing non-consensual intimate images are both criminal offences and priority offences as of January 2026, meaning platforms bear an active prevention duty, not just a removal duty.

Is anonymous image sharing still legal in the UK?

Yes. The Act regulates platforms, not users. Sharing images anonymously remains legal. What changed is the obligations on the platforms hosting those anonymous uploads — they must now assess the risk of illegal content, implement systems to prevent it, and demonstrate compliance to Ofcom. Some have responded by blocking UK users rather than complying.

What happened to Imgur under the Online Safety Act?

Imgur chose to block access for UK users rather than implement the compliance measures Ofcom required. UK users attempting to access the platform now see a block page. The platform remains accessible via VPN, though using a VPN to circumvent a deliberate access restriction sits in contested territory. PostImage and ImgBB remain accessible in the UK and are the alternatives we recommend for anonymous image sharing.

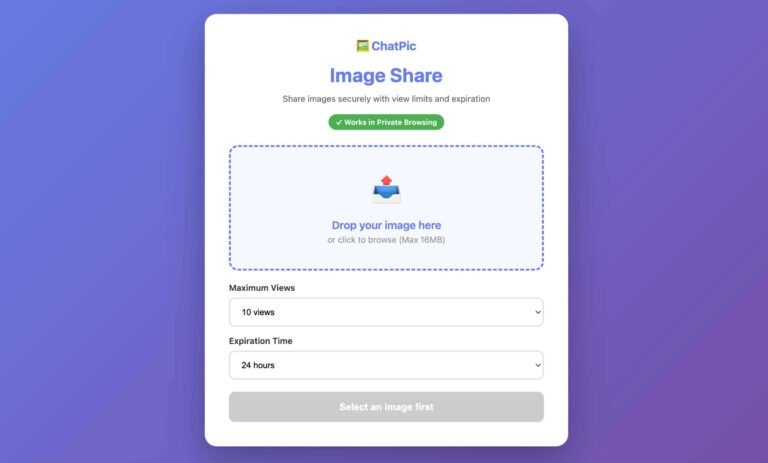

Further Reading

If your image has been shared without your consent and you need help getting it removed, see our guide to your rights under UK law when a photo is shared without permission. For understanding what data is embedded in the images you share, read our guide on EXIF data and photo privacy. For an explanation of how ChatPic’s own failure to comply with these standards contributed to its closure, read why ChatPic really shut down.