S-NISQ Quantum Error Correction: What It Is, Why It Matters

Introduction

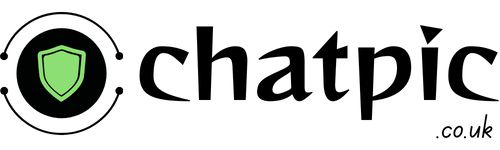

S-NISQ (Scalable Noisy Intermediate-Scale Quantum) refers to the transitional era in quantum computing in which devices begin demonstrating meaningful quantum error correction at scale: physical error rates sit below the error-correction threshold, but full fault-tolerant quantum computation has not yet been achieved. In practical terms, S-NISQ devices produce logical qubits with lower error rates than their constituent physical qubits, a milestone that pure NISQ hardware cannot reach. Google’s Willow processor crossed this line in late 2024 with 105 qubits, and the field has not looked the same since.

Why the S-NISQ Era Matters More Than Most Quantum Headlines

Quantum computing has generated more ambitious headlines than verifiable results for most of the past decade. S-NISQ matters because it marks the first time hardware evidence supports theoretical claims about error correction at scale.

The significance runs deeper than publication counts. For years, quantum error correction existed primarily as theoretical architecture: stabilizer codes, surface codes, and LDPC codes that worked on paper but faced overwhelming overhead in hardware. The S-NISQ transition is the point where that overhead starts becoming manageable. Devices are now demonstrating that adding more physical qubits actually reduces logical error rates rather than amplifying noise. That inflection point, called below-threshold operation, is the technical foundation of everything S-NISQ represents.

What Quantum Error Is and Why It Is the Central Problem

To understand S-NISQ quantum error correction, start with why quantum errors exist at all.

A classical computer bit holds a 0 or a 1. Environmental interference — heat, vibration, electromagnetic noise — might flip it occasionally, but error rates are vanishingly small and easily managed. A quantum bit, or qubit, holds a superposition of 0 and 1 simultaneously. Any interaction with the environment — any measurement, any stray electromagnetic field, any thermal fluctuation — collapses that superposition. This is quantum decoherence, and it happens fast.

In current superconducting quantum processors, qubits typically hold coherent states for tens to hundreds of microseconds before decoherence corrupts the computation. Google’s Willow chip reports coherence (T1) times approaching 100 microseconds, roughly a fivefold improvement over its predecessor, which sounds modest but represents enormous progress. Most useful quantum algorithms require many more gate operations than current coherence times allow before errors accumulate beyond correction.
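A rough way to see why errors cap circuit depth: if each gate fails independently with probability p, a circuit of N gates runs error-free with probability (1 - p)^N. The sketch below uses that simplified model (independent, uniform gate errors). The function names are hypothetical and the error rates are illustrative, not measurements from any device.

```python
import math


def success_probability(gate_error: float, num_gates: int) -> float:
    """Probability that every gate succeeds, assuming independent gate errors."""
    return (1.0 - gate_error) ** num_gates


def max_gates(gate_error: float, min_success: float = 0.5) -> int:
    """Largest gate count whose error-free probability stays at or above min_success."""
    return math.floor(math.log(min_success) / math.log(1.0 - gate_error))


if __name__ == "__main__":
    for p in (1e-2, 1e-3, 1e-4):
        n = max_gates(p)
        print(f"gate error {p:.0e}: about {n} gates before success drops below 50%")
```

Under this model the usable depth scales roughly as 1/p, which is why algorithms needing millions or billions of operations depend on error correction rather than on incremental hardware improvements alone.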

The standard answer to this problem is quantum error correction: encoding one logical qubit across many physical qubits so that errors affecting a subset of the physical layer can be detected and corrected without disturbing the protected logical information. Early estimates required 1,000 to 10,000 physical qubits per logical qubit. The S-NISQ era is defined in part by research that is compressing that overhead ratio toward the practical range.
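The core idea, spreading one logical bit over several physical carriers, can be illustrated with a classical repetition code decoded by majority vote. This is a deliberately simplified stand-in for a quantum code: it handles only bit flips and assumes independent errors. The function name is hypothetical.

```python
from math import comb


def logical_error_rate(p: float, distance: int) -> float:
    """Probability that majority vote fails, i.e. more than half of the copies flip."""
    if distance % 2 == 0:
        raise ValueError("distance must be odd so majority vote has no ties")
    threshold = distance // 2 + 1
    return sum(
        comb(distance, k) * p**k * (1 - p) ** (distance - k)
        for k in range(threshold, distance + 1)
    )


if __name__ == "__main__":
    for p in (0.01, 0.1, 0.6):
        rates = [logical_error_rate(p, d) for d in (1, 3, 5, 7)]
        print(f"p={p}: " + ", ".join(f"{r:.2e}" for r in rates))
```

When p is below this toy code's threshold of 50%, adding copies lowers the logical error rate; above it, adding copies makes things worse. Real quantum codes show the same qualitative behaviour, but with far lower thresholds (a figure around 1% is commonly quoted for surface codes).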

NISQ vs S-NISQ vs FTQC — The Honest Comparison

The three-stage framework for quantum computing progress is the clearest lens for understanding where S-NISQ fits. Most explanations of this topic collapse two of the three stages together, which produces confusion about what has actually been achieved.

| Feature | NISQ | S-NISQ | FTQC |
|---|---|---|---|
| Qubit count | 50–1,000 physical | 100–10,000 physical | 1M+ physical |
| Error correction | None or minimal | Below-threshold, partial | Full logical qubit correction |
| Logical qubits | Not achievable | Early demonstrations | Full-scale logical computation |
| Error rate per gate | ~0.1–1% | ~0.01–0.1% | <0.001% (logical) |
| Key milestone | Quantum supremacy demos | Below-threshold operation | Fault-tolerant algorithms |
| Practical usefulness | Limited, hybrid algorithms | Growing, specific domains | General computational advantage |
| Current status (2026) | Surpassed | Active transition | 2030+ roadmap |
| Representative hardware | IBM Eagle (2021) | Google Willow, Quantinuum H2 | Not yet achieved |

NISQ devices demonstrated that quantum hardware could scale in qubit count but remained limited by error rates that accumulated faster than corrections could manage. The defining feature of NISQ-era research was not error correction but error mitigation — statistical techniques for reducing the effect of errors after the fact rather than preventing them during computation.
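One widely used mitigation technique is zero-noise extrapolation: run the same circuit at deliberately amplified noise levels, then extrapolate the measured expectation value back to the zero-noise limit. The sketch below shows only the extrapolation step with a straight-line fit. The data points are synthetic and the function names are hypothetical.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def zero_noise_estimate(noise_scales, measured_values):
    """Extrapolate measured expectation values to noise scale 0 (the fit intercept)."""
    _, intercept = linear_fit(noise_scales, measured_values)
    return intercept


if __name__ == "__main__":
    # Synthetic data: the expectation value decays as noise is amplified 1x, 2x, 3x.
    scales = [1.0, 2.0, 3.0]
    values = [0.80, 0.62, 0.44]
    estimate = zero_noise_estimate(scales, values)
    print(f"raw value at scale 1: {values[0]:.2f}, extrapolated: {estimate:.2f}")
```

The estimate is a statistical correction applied to results after the runs finish. Nothing in it protects the quantum state while the circuit executes, which is the difference between mitigation and correction.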

S-NISQ crosses the qualitative boundary where actual error correction outpaces error accumulation. The term itself is not universally standardised (some researchers still use NISQ as a broad category), but the technical milestone it describes is real and measurable: below-threshold operation, where increasing the code distance of a surface code reduces logical error rates rather than adding noise.
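Below-threshold scaling is often summarised by a suppression factor Λ: the logical error rate per cycle divides by Λ each time the code distance increases by 2, so ε(d) ≈ ε(3) / Λ^((d − 3)/2). The sketch below projects that relation for Λ = 2.14 (the figure quoted for Willow) and for a hypothetical above-threshold value of 0.8. The distance-3 base rate is a placeholder, not a measured number, and the function name is hypothetical.

```python
def projected_logical_error(base_error: float, suppression: float, distance: int,
                            base_distance: int = 3) -> float:
    """Project the logical error per cycle at `distance` from the rate at `base_distance`.

    Assumes the error divides by a constant factor `suppression` for every
    increase of 2 in code distance (odd distances only).
    """
    if distance % 2 == 0 or distance < base_distance:
        raise ValueError("distance must be odd and >= base_distance")
    steps = (distance - base_distance) // 2
    return base_error / suppression**steps


if __name__ == "__main__":
    base = 3e-3  # hypothetical distance-3 logical error per cycle
    for lam in (2.14, 0.8):
        row = [projected_logical_error(base, lam, d) for d in (3, 5, 7, 9)]
        print(f"Lambda={lam}: " + ", ".join(f"{e:.2e}" for e in row))
```

With Λ above 1 the projected error shrinks exponentially in distance; with Λ below 1 every added layer of qubits makes the logical qubit worse. That sign change is what "below threshold" refers to.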

FTQC — fault-tolerant quantum computing — remains the long-horizon target. IBM has committed to building Starling, a 200 logical-qubit system, by 2028. Google’s roadmap targets a 1-million physical qubit fault-tolerant computer by 2030. These are engineering targets, not guarantees.

Quantum Error Correction Techniques in the S-NISQ Era

Three error correction approaches dominate current S-NISQ research. Understanding the differences matters because each company has made a different hardware bet.

Surface Codes

Surface codes arrange qubits on a 2D grid and detect errors through stabilizer measurements: parity checks on small clusters of qubits that reveal error syndromes without directly measuring (and thereby collapsing) the encoded data. Google’s Willow processor implements surface codes with exponential error suppression: each step up in code distance (3 to 5 to 7) cut the logical error rate by a factor of about 2.14, confirming below-threshold scaling for the first time on a physically relevant system.
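The stabilizer idea can be seen in miniature with the three-qubit bit-flip code, simulated classically here. Two parity checks locate a single flipped bit without ever reading the encoded value. This is a toy illustration, not a surface code: it ignores phase errors and measurement errors, and all names are hypothetical.

```python
# Syndrome (parity of bits 0 and 1, parity of bits 1 and 2) -> index of the flipped bit.
SYNDROME_TO_FLIP = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def encode(bit: int) -> list[int]:
    """Encode one logical bit as three identical physical bits."""
    return [bit, bit, bit]


def measure_syndrome(block: list[int]) -> tuple[int, int]:
    """Parity checks compare neighbours; they never reveal the encoded bit itself."""
    return (block[0] ^ block[1], block[1] ^ block[2])


def correct(block: list[int]) -> list[int]:
    """Flip back the bit that the syndrome points to, if any."""
    flipped = SYNDROME_TO_FLIP[measure_syndrome(block)]
    fixed = list(block)
    if flipped is not None:
        fixed[flipped] ^= 1
    return fixed


if __name__ == "__main__":
    for logical in (0, 1):
        for position in range(3):
            block = encode(logical)
            block[position] ^= 1  # inject a single bit-flip error
            print(logical, position, measure_syndrome(block), correct(block))
```

The syndrome for a given error position is the same whether the encoded bit is 0 or 1, which is how the check avoids disturbing the protected information. Two simultaneous flips are misdiagnosed by this code, which is why larger code distances are needed as error rates or circuit depth grow.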

Surface codes require significant physical qubit overhead — roughly 1,000 physical qubits per logical qubit at current error rates — but their 2D connectivity requirement makes them well-matched to superconducting qubit architectures. They remain the most experimentally mature approach to quantum error correction as of 2026.
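The overhead can be estimated from the code distance. In the commonly used rotated surface-code layout, a distance-d patch holds d² data qubits plus d² − 1 measurement qubits, so 2d² − 1 in total. The sketch below tabulates that count. It assumes one patch per logical qubit and ignores routing space and any extra qubits a real device may need. The function names are hypothetical.

```python
def physical_qubits(distance: int) -> int:
    """Qubits in one rotated surface-code patch: d^2 data + (d^2 - 1) measurement."""
    if distance < 1 or distance % 2 == 0:
        raise ValueError("distance must be a positive odd integer")
    return 2 * distance**2 - 1


def max_distance(qubit_budget: int) -> int:
    """Largest odd code distance whose single patch fits in the qubit budget."""
    if qubit_budget < physical_qubits(1):
        raise ValueError("budget too small for any patch")
    distance = 1
    while physical_qubits(distance + 2) <= qubit_budget:
        distance += 2
    return distance


if __name__ == "__main__":
    for d in (3, 5, 7, 23):
        print(f"distance {d}: {physical_qubits(d)} physical qubits")
    print("largest distance within 105 qubits:", max_distance(105))
```

Under these assumptions a figure of roughly 1,000 physical qubits per logical qubit corresponds to a code distance in the low twenties, while a 105-qubit chip has room for a single distance-7 patch.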

qLDPC Codes

Quantum Low-Density Parity-Check codes offer a more efficient path to logical qubits because they encode more logical qubits per physical qubit than surface codes. IBM switched to qLDPC codes in 2024 following its transition from the Eagle and Heron processors toward modular “Quantum System Two” architectures. According to Riverlane’s QEC analysis, other hardware companies are expected to follow IBM’s move to qLDPC in 2026.
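A back-of-the-envelope comparison shows what "more logical qubits per physical qubit" means. Codes are usually labelled [[n, k, d]]: n data qubits, k logical qubits, distance d. The sketch below compares the [[144, 12, 12]] bivariate bicycle code published by IBM researchers in 2024 (144 data qubits plus 144 check qubits) with twelve rotated surface-code patches at the same distance. The class and function names are hypothetical, and the comparison counts qubits only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CodeFootprint:
    name: str
    data_qubits: int
    check_qubits: int
    logical_qubits: int

    @property
    def physical_per_logical(self) -> float:
        """Total physical qubits (data plus check) divided by logical qubits."""
        return (self.data_qubits + self.check_qubits) / self.logical_qubits


def surface_code_patches(distance: int, logical_qubits: int) -> CodeFootprint:
    """One rotated surface-code patch (d^2 data, d^2 - 1 checks) per logical qubit."""
    return CodeFootprint(
        name=f"{logical_qubits} surface-code patches, d={distance}",
        data_qubits=logical_qubits * distance**2,
        check_qubits=logical_qubits * (distance**2 - 1),
        logical_qubits=logical_qubits,
    )


if __name__ == "__main__":
    qldpc = CodeFootprint("[[144, 12, 12]] qLDPC code", 144, 144, 12)
    surface = surface_code_patches(distance=12, logical_qubits=12)
    for code in (qldpc, surface):
        print(f"{code.name}: {code.physical_per_logical:.0f} physical qubits per logical qubit")
```

Qubit count is only part of the picture: the sketch says nothing about the connectivity each code needs or how hard its syndromes are to decode.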

The tradeoff is connectivity: qLDPC codes require long-range qubit interactions that are difficult to achieve in superconducting hardware without significant engineering overhead. IBM’s modular approach is designed to address this by distributing the connectivity requirement across connected modules rather than a single chip.

Stabilizer Codes and Trapped-Ion Systems

Quantinuum, using trapped-ion hardware, demonstrated 50 entangled logical qubits with two-qubit logical gate fidelity exceeding 98% in 2025, a milestone that represents the most advanced fault-tolerant capability achieved on a commercial system to date. Ion trap systems offer physical gate fidelities above 99.9% and long coherence times, at the cost of slower gate speeds compared to superconducting systems. The trapped-ion approach to quantum error correction is particularly strong because the native gate fidelity starts high, reducing the number of physical qubits required to reach below-threshold operation.

What Most People Get Wrong About S-NISQ Progress

The most common misreading of S-NISQ advances is treating below-threshold operation as equivalent to fault-tolerant quantum computing. They are not the same.

Below-threshold operation means that the hardware has crossed the engineering threshold where more qubits improve rather than worsen logical error rates. It does not mean error rates are low enough to run practically useful fault-tolerant algorithms. Breaking RSA-2048 encryption via Shor’s algorithm, for example, requires thousands of logical qubits running a circuit of enormous depth, which at current overheads means on the order of a million or more physical qubits. Current S-NISQ hardware operates at 50–200 logical qubits with error rates that are improving but not yet at the level required for cryptographically relevant computation.

The second misreading is the “Quantum Winter” framing: the argument that since useful fault-tolerant computers are still years away, current progress is overhyped. This misses the point of the S-NISQ transition. The valuable insight from 2025 hardware progress is not that useful quantum computers exist today. It is that the below-threshold milestone, which many sceptics argued might not be achievable with current qubit technology, has been demonstrated. That removes one of the core engineering risk factors from the long-term roadmap.

The third misreading — particularly common in investor-facing communication — is conflating quantum volume and algorithmic qubit metrics with actual computational advantage. Quantum volume is a useful benchmark but it measures device quality, not problem-solving capability. A device with high quantum volume may still be outperformed by a classical GPU on any practically useful problem. Honest S-NISQ evaluation requires separating hardware milestones from application benchmarks.

The Insight That Most Quantum Articles Miss

Here is what almost no explainer article covers: the S-NISQ transition creates an asymmetric skills gap that may become the primary bottleneck for the field.

According to Riverlane’s 2025 QEC landscape analysis, there are only an estimated 600 to 700 quantum error correction specialists worldwide. For context, the global quantum computing workforce is estimated at 20,000 to 30,000 people. QEC specialists — those with the specific expertise to design, implement, and optimise error correction protocols for real hardware — represent roughly 2 to 3% of that total.

As S-NISQ hardware becomes the standard deployment tier, demand for QEC expertise will scale with device production. IBM, Google, Quantinuum, IonQ, and the growing number of national quantum programmes (DARPA’s Quantum Benchmarking Initiative, the US Department of Energy’s Genesis Mission, UK and EU national programmes) all require QEC specialists. The supply pipeline — PhD programmes, postdoctoral research, industry training — cannot grow at the rate hardware is scaling.

This skills constraint matters for anyone building quantum strategy in 2026. The hardware milestones are advancing on schedule. The human capital to operate and optimise S-NISQ devices is the binding constraint that almost every roadmap underestimates.

A Real Scenario: Choosing Between NISQ Algorithms and S-NISQ Hardware

A pharmaceutical research team in early 2024 needed to model protein folding dynamics for a drug discovery project. Their options: run hybrid classical-quantum algorithms on existing NISQ hardware, wait for S-NISQ devices, or continue with classical simulation.

The NISQ path offered limited advantage. Variational Quantum Eigensolver algorithms on 100-qubit NISQ devices produced results comparable to classical methods for small molecules but could not scale to the protein systems the team needed without error accumulation destroying the output.

By Q3 2025, the team gained access to a Quantinuum H2 system with 56 logical qubits operating at S-NISQ performance levels. The error correction overhead was still significant — each logical qubit required approximately 40 physical qubits — but the below-threshold error rates meant computations ran to completion without mid-circuit error accumulation that previously corrupted results.

The outcome was not a 10,000x speedup. It was a qualitative shift: the ability to complete computations that NISQ devices could not finish at useful accuracy. That move from “interesting but limited” to “actually produces correct output” is the practical meaning of the S-NISQ transition for applied research.

The Takeaway

S-NISQ quantum error correction is not hype and it is not the finish line. It is a precisely defined engineering milestone, below-threshold error correction demonstrated on real hardware, that removes one of the central scientific objections to the long-term quantum computing roadmap. Google, IBM, and Quantinuum have demonstrated S-NISQ performance on different hardware architectures using different error correction approaches, all in 2024–2025. The field has accelerated faster in those two years than in the previous five.

The shift of the bottleneck to human capital, with fewer than 700 QEC specialists worldwide against rapidly growing hardware deployment, is the most underreported constraint in quantum computing strategy today.

The specific action to take: read the Riverlane Quantum Error Correction Report 2025 for the most comprehensive current landscape, and track IBM’s Starling and Google’s post-Willow roadmap for the S-NISQ to FTQC transition timeline. Those sources, read together, give you the most accurate picture available of where the field actually is.

FAQs

What does S-NISQ stand for in quantum computing?

S-NISQ stands for Scalable Noisy Intermediate-Scale Quantum. It refers to the transitional computing era between the original NISQ phase, where devices operated with high error rates and no practical error correction, and full fault-tolerant quantum computing.

S-NISQ devices demonstrate below-threshold error correction, meaning adding more physical qubits improves rather than worsens the logical error rate. Google’s Willow processor and Quantinuum’s H2 system represent current S-NISQ hardware as of 2026.

How is S-NISQ different from regular NISQ quantum computing?

The critical difference is error correction. NISQ devices, typically 50 to 1,000 qubits, operate with error rates too high for practical error correction algorithms to overcome. Researchers instead use error mitigation: statistical post-processing that reduces noise effects but cannot eliminate them.

S-NISQ devices cross the below-threshold boundary where actual quantum error correction codes (surface codes, qLDPC codes, stabilizer codes) produce logical qubits with lower error rates than their physical components. This qualitative shift is what separates the two eras.

Is S-NISQ the same as fault-tolerant quantum computing?

No, and this distinction matters. S-NISQ represents the early phase of practical error correction: below-threshold operation with 50 to several hundred logical qubits. Fault-tolerant quantum computing (FTQC) requires millions of physical qubits running full error-corrected algorithms at logical error rates below 0.001%. IBM’s Starling system targeting 200 logical qubits by 2028 and Google’s 1-million-qubit roadmap for 2030 represent the FTQC target. S-NISQ hardware exists today; FTQC does not.

What quantum error correction techniques does S-NISQ use?

Three main approaches dominate S-NISQ hardware in 2026. Surface codes, implemented on Google’s Willow and similar superconducting platforms, use stabilizer measurements on 2D qubit grids to detect and correct errors. qLDPC codes, adopted by IBM in 2024, encode more logical qubits per physical qubit but require long-range connectivity.

Trapped-ion systems from Quantinuum use native high-fidelity gates that reduce the physical qubit overhead required for below-threshold operation. Each approach reflects the hardware architecture of the company implementing it.

Which companies have achieved S-NISQ performance in 2026?

Google, IBM, and Quantinuum are the three companies with the clearest S-NISQ demonstrations as of early 2026. Google’s Willow processor demonstrated below-threshold surface code error correction with 105 qubits and a 2.14x error reduction per increase in code distance.

Quantinuum demonstrated 50 entangled logical qubits with two-qubit gate fidelity exceeding 98%. IBM’s transition to qLDPC codes positions its Quantum System Two architecture on the S-NISQ trajectory, with the Starling 200-logical-qubit system scheduled for 2028. IonQ and emerging platforms are following with hardware expected to reach S-NISQ benchmarks within 2026.

What is the realistic timeline for useful fault-tolerant quantum computation?

Based on published hardware roadmaps and 2025 progress, fault-tolerant quantum computing for commercially relevant problems sits on a 2030–2035 timeline for most analysts. DARPA’s Quantum Benchmarking Initiative is targeting a $1 billion quantum computer capable of running fault-tolerant algorithms by 2033.

Bill Gates has publicly estimated 3 to 5 years for utility-scale applications, a timeline that aligns with the 2028–2030 window if IBM Starling and Google’s roadmap milestones deliver. Breaking public-key cryptography at scale remains a 2035+ scenario given the thousands of logical qubits, and likely a million or more physical qubits, required.